Introduction

The AI industry has experienced explosive growth over the last two years. Developers, startups, and enterprises adopted AI tools at record speed because pricing felt simple, affordable, and scalable. Monthly subscriptions made advanced coding assistants, automation tools, and AI agents accessible to teams of every size. Organizations quickly integrated these tools into their daily workflows for code generation, debugging, documentation, automation, and operational support. As adoption increased, many users assumed these low prices would remain stable for the long term. That assumption is now being challenged.

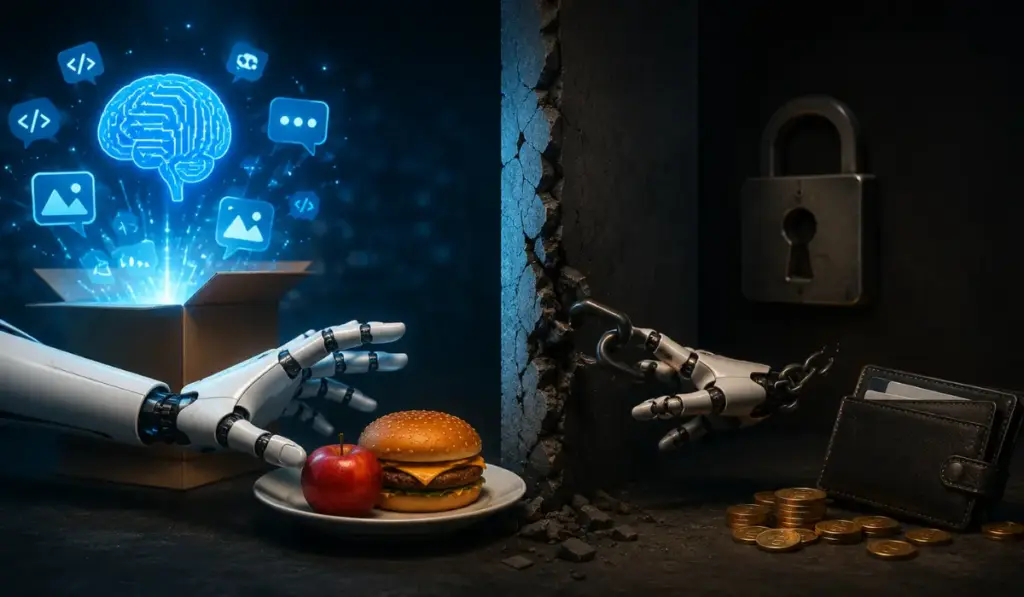

Recent pricing updates from major AI providers show that the cheap AI era is ending. Anthropic’s Claude Code pricing changes have become one of the strongest signals of this shift. Programmatic access that once felt generous is now moving toward structured API credits and tighter usage controls. This is not an isolated event. It reflects the broader economic reality of AI infrastructure. Running advanced AI systems requires enormous compute power, expensive hardware, and continuous operational investment. AI companies cannot continue subsidizing heavy users forever while maintaining sustainable business models.

This shift matters because businesses are no longer simply paying for productivity software. In many cases, they are building operational dependency on rented AI infrastructure. When pricing changes suddenly, businesses that rely heavily on these tools lose predictability and control. Developers, founders, and technology leaders must now rethink how AI fits into long-term business strategy.

The AI Subsidy Era Was Never Meant to Last

Cheap AI subscriptions played an important role in accelerating adoption, but they were never designed to remain permanent. Frontier AI companies needed rapid market expansion, so their goal was simple: attract developers, encourage experimentation, and build strong workflow habits before competitors dominated the market. Affordable subscriptions reduced friction and made adoption easier. This strategy worked extremely well, allowing millions of developers to integrate AI into coding, research, support, and automation workflows.

However, this model had an obvious weakness. Large language models are extremely expensive to operate. Training costs are massive, but inference costs also remain high. Every user interaction requires compute resources, and heavy users running automation agents, background tasks, code generation loops, and large-scale workflows consume significantly more resources than casual users. This creates an imbalance that flat subscription pricing cannot support indefinitely.

For a while, investor funding covered the gap. Venture capital allowed AI companies to prioritize growth over profitability, which created the illusion that advanced AI could remain permanently affordable through simple monthly pricing. That was never realistic. Eventually, these companies needed to shift toward sustainable economics. The market is now entering that stage.

What Claude Code Pricing Changes Really Mean

Anthropic’s Claude Code changes have attracted major attention because they clearly illustrate the industry transition. Previously, many developers benefited from programmatic access models that effectively operated under hidden subsidies. Automation-heavy workflows, SDK integrations, GitHub Actions, and terminal-based usage all benefited from generous subscription structures that made large-scale AI usage surprisingly affordable.

That model is now changing. Anthropic has introduced dedicated API credits for programmatic users based on subscription tiers. Instead of broad usage flexibility, developers now operate within defined monthly limits. This introduces cost accountability in a much more visible way.

For occasional users, this may not create major disruption. However, for heavy users, the impact is far more significant. Intensive coding sessions and automation workflows can consume credits quickly. Teams that previously enjoyed predictable subscription costs now face a more usage-sensitive pricing structure. This forces businesses to evaluate the real economics of AI usage rather than relying on hidden subsidies.
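Heavy users feel the change first. The minimal Python sketch below shows how quickly a fixed monthly credit allotment runs out at different daily spend rates. The credit amount and daily figures are hypothetical placeholders, not Anthropic's actual tier values. The model also assumes spend stays roughly constant from day to day.

```python
from typing import Optional


def day_credits_run_out(
    monthly_credit: float, daily_spend: float, days_in_month: int = 30
) -> Optional[int]:
    """Return the first day (1-based) on which cumulative spend exceeds the
    monthly credit, or None if the credit lasts the whole month.

    Assumes a flat daily spend; real usage is burstier.
    """
    if daily_spend <= 0:
        return None
    spent = 0.0
    for day in range(1, days_in_month + 1):
        spent += daily_spend
        if spent > monthly_credit:
            return day
    return None


# Hypothetical figures: the same $100 monthly credit for an occasional user
# and for an automation-heavy user.
occasional = day_credits_run_out(monthly_credit=100.0, daily_spend=2.0)
heavy = day_credits_run_out(monthly_credit=100.0, daily_spend=15.0)
print(occasional, heavy)
```

With these made-up numbers, the occasional user never exhausts the credit. The heavy user runs out on day 7 and spends the rest of the month under whatever overage or limit policy the provider applies.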

This shift is not only about Anthropic. It reflects a broader industry movement. AI companies are moving away from growth-focused pricing models and toward sustainable revenue structures. Developers should treat this as an early signal of what is likely coming across the wider AI ecosystem.

AI Pricing Is Becoming More Complex

One of the biggest frustrations developers now face is pricing complexity. During the early adoption phase, AI pricing was intentionally simple. Flat monthly subscriptions reduced decision friction and allowed teams to experiment freely without financial uncertainty.

Modern AI pricing is becoming far more layered. Subscriptions now combine API credits, token billing, model-specific costs, rate limits, and usage caps. This creates uncertainty and makes forecasting more difficult. Teams can no longer estimate AI costs as easily as they once did, which creates hesitation around scaling AI-heavy workflows.
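The layering is easier to see in code. The sketch below estimates a monthly bill from three of the ingredients named above: per-model token prices, an included subscription credit, and a hard usage cap. Model names, prices, and usage volumes are all hypothetical. The point is how many inputs a forecast now depends on, not the specific numbers.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical prices in USD per million tokens. Real prices differ by
# provider, model, and date; treat these purely as placeholders.
PRICES: Dict[str, Dict[str, float]] = {
    "large-model": {"input": 15.0, "output": 75.0},
    "small-model": {"input": 1.0, "output": 5.0},
}


def monthly_bill(
    usage: List[Tuple[str, int, int]],
    prices: Dict[str, Dict[str, float]],
    included_credit: float = 0.0,
    hard_cap: Optional[float] = None,
) -> Tuple[float, bool]:
    """Estimate a month's billable amount from per-model token usage.

    usage: (model_name, input_tokens, output_tokens) tuples.
    Returns (billable_usd, cap_hit). Gross token cost is reduced by any
    included subscription credit. If a hard cap is set, the bill stops there
    (and in practice, so does the service).
    """
    gross = 0.0
    for model, tokens_in, tokens_out in usage:
        rate = prices[model]
        gross += tokens_in / 1_000_000 * rate["input"]
        gross += tokens_out / 1_000_000 * rate["output"]
    billable = max(0.0, gross - included_credit)
    if hard_cap is not None and billable > hard_cap:
        return hard_cap, True
    return billable, False


usage = [
    ("large-model", 2_000_000, 1_000_000),   # agentic coding sessions
    ("small-model", 10_000_000, 2_000_000),  # background automation
]
print(monthly_bill(usage, PRICES, included_credit=25.0))
print(monthly_bill(usage, PRICES, included_credit=25.0, hard_cap=80.0))
```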

This matters because predictability is essential for business planning. Developers want to automate confidently without worrying about surprise expenses. Finance teams need budget stability, while CTOs require operational clarity. Complex pricing weakens all three.

This pricing evolution follows a familiar pattern. Cloud computing experienced a similar journey. Early cloud adoption emphasized simplicity and accessibility, but over time pricing became increasingly granular and difficult to forecast. AI is now following the same path. As this happens, organizations must adopt stronger cost management discipline because AI expenses can no longer be treated casually or grouped alongside ordinary SaaS subscriptions.

Enterprises Are Already Feeling Budget Pressure

Reports suggesting that companies such as ServiceNow and Uber exhausted AI coding budgets earlier than expected highlight an important reality. Even large enterprises are struggling to forecast AI spending accurately.

This should concern smaller organizations even more. Large enterprises typically have procurement teams, financial oversight, vendor management systems, and budget controls. If these organizations are facing challenges managing AI costs, startups and smaller businesses face even greater exposure.

Many companies still treat AI as a software expense, but this mindset is outdated. AI increasingly behaves like infrastructure because costs scale directly with usage. The more deeply AI integrates into workflows, the greater the exposure to unpredictable spending.

This changes financial planning entirely. Businesses must now implement AI governance, monitor usage patterns, establish alerts, and create spending policies. Without these safeguards, AI costs can quickly become difficult to control and may create operational risk.
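The safeguards listed above (usage monitoring, alerts, and a spending policy) can start very small. Here is a minimal Python sketch of a spend guard. It fires each alert threshold once and refuses work that would exceed the monthly budget. The class name, thresholds, and budget are hypothetical, and the guard tracks spend in memory only.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class AISpendGuard:
    """Minimal sketch of a team-level AI budget policy.

    The budget, alert thresholds, and blocking rule are hypothetical choices.
    A real setup would feed this from the provider's usage data.
    """

    monthly_budget: float
    alert_fractions: Tuple[float, ...] = (0.5, 0.8, 1.0)
    spent: float = 0.0
    alerts_fired: List[float] = field(default_factory=list)

    def record(self, cost: float) -> List[float]:
        """Add a usage cost and return any thresholds crossed for the first time."""
        self.spent += cost
        newly_crossed = []
        for fraction in self.alert_fractions:
            if fraction not in self.alerts_fired and self.spent >= fraction * self.monthly_budget:
                self.alerts_fired.append(fraction)
                newly_crossed.append(fraction)
        return newly_crossed

    def allow(self, estimated_cost: float) -> bool:
        """Spending policy: reject work that would push spend past the budget."""
        return self.spent + estimated_cost <= self.monthly_budget


guard = AISpendGuard(monthly_budget=1000.0)
print(guard.record(400.0))  # below every threshold
print(guard.record(150.0))  # crosses 50%
print(guard.record(300.0))  # crosses 80%
print(guard.allow(200.0), guard.allow(100.0))
```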

Vendor Lock-In Is Becoming a Serious Business Risk

Pricing changes have also exposed another issue many teams ignored during rapid adoption: vendor dependency. As businesses integrated AI tools deeply into development workflows, customer operations, and automation systems, switching costs increased significantly.

Pricing is only one part of the risk. Businesses also face API changes, model deprecations, downtime risks, policy changes, and evolving data handling requirements. If a third-party AI provider becomes critical infrastructure, your business inherits risks that remain outside your control.

This is why strategic technology planning matters. A skilled fractional CTO understands that infrastructure dependency should always be evaluated before workflows become too difficult or expensive to migrate. By the time businesses are forced to react, migration costs are often much higher than initially expected.

Chasing the Next Cheap Tool Is Not a Long-Term Strategy

When prices rise or limits tighten, many developers immediately start searching for the next cheap alternative. The reaction is understandable, but it rarely solves the underlying problem; it simply restarts the same cycle.

A cheap tool attracts users, dependency grows, pricing changes, and users migrate elsewhere. Then the pattern repeats with another platform.

This cycle creates hidden operational costs. Teams must evaluate alternatives, test workflows, retrain developers, rebuild integrations, and absorb productivity losses during migration. These costs are often underestimated and can outweigh any short-term subscription savings.
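A quick payback calculation makes these hidden costs concrete. The sketch below prices the migration tasks named above in staff hours. It then asks how many months of cheaper subscription fees it takes to recover them. Every figure is an illustrative assumption, not data from any real migration.

```python
import math
from typing import Dict, Optional


def migration_cost(hours_by_task: Dict[str, float], hourly_rate: float) -> float:
    """One-time cost of switching tools: total staff hours times a loaded hourly rate."""
    return sum(hours_by_task.values()) * hourly_rate


def payback_months(monthly_savings: float, one_time_cost: float) -> Optional[int]:
    """Whole months of subscription savings needed to recover the migration cost."""
    if monthly_savings <= 0:
        return None
    return math.ceil(one_time_cost / monthly_savings)


# Hypothetical effort estimates for one tool switch, in staff hours.
hours = {
    "evaluate alternatives": 40,
    "test workflows": 60,
    "retrain developers": 80,
    "rebuild integrations": 120,
    "productivity loss during cutover": 100,
}
cost = migration_cost(hours, hourly_rate=100.0)
print(cost, payback_months(monthly_savings=500.0, one_time_cost=cost))
```

With these placeholder numbers, saving $500 a month takes 80 months to recover a $40,000 switch. If another price change arrives before then, the move never pays for itself.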

A stronger long-term strategy focuses on reducing dependency rather than constantly chasing temporary pricing advantages.

Local AI Is Becoming a Practical Alternative

Local AI was previously dismissed by many teams because hardware requirements seemed unrealistic. That situation is changing quickly. Hardware has improved, open-source models have become more capable, and inference speeds have increased significantly.

This makes local AI a much more practical option for developers and businesses. Running local models offers more predictable economics because the main cost is an upfront hardware investment, plus ongoing power and maintenance, rather than an open-ended subscription. Sensitive code and business data stay on infrastructure you control. Teams avoid API limitations, vendor pricing surprises, and dependency on external infrastructure decisions.

Businesses should now compare local AI not only on benchmark quality but also on ownership economics, privacy advantages, workflow stability, and long-term cost control. As cloud AI pricing rises, local infrastructure becomes easier to justify.
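The ownership argument reduces to a break-even calculation. On one side is a one-time hardware cost plus ongoing power and maintenance; on the other is a recurring cloud bill. This Python sketch finds the first month in which local becomes no more expensive than cloud. It assumes both monthly costs are flat and that local and cloud output are interchangeable for the workload. All prices, and the 36-month horizon (a stand-in for hardware useful life), are hypothetical.

```python
import math
from typing import Optional


def breakeven_month(
    hardware_cost: float,
    local_monthly_opex: float,
    cloud_monthly_cost: float,
    horizon_months: int = 36,
) -> Optional[int]:
    """First month in which cumulative local cost is no higher than cumulative cloud cost.

    local(m) = hardware_cost + local_monthly_opex * m
    cloud(m) = cloud_monthly_cost * m
    Returns None if cloud is never more expensive per month, or if
    break-even falls past the horizon.
    """
    monthly_gap = cloud_monthly_cost - local_monthly_opex
    if monthly_gap <= 0:
        return None
    month = max(1, math.ceil(hardware_cost / monthly_gap))
    return month if month <= horizon_months else None


# Hypothetical: $6,000 workstation, $100/month power and upkeep, $600/month cloud spend.
print(breakeven_month(6000.0, 100.0, 600.0))
# Same hardware against a much smaller cloud bill: no break-even within 36 months.
print(breakeven_month(6000.0, 100.0, 150.0))
```

The sketch omits model-quality differences, staff time to run the hardware, and resale value. Treat the result as one input to the decision rather than the answer.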

AI Must Be Treated as Infrastructure

The most important mindset shift is simple: AI is no longer just software. For many businesses, AI is becoming operational infrastructure. Infrastructure should be predictable, auditable, reliable, and controllable. Subscription dependency weakens all of these qualities.

If AI powers coding workflows, automation systems, customer operations, or internal tools, ownership matters. This does not mean every business should abandon cloud AI immediately; cloud tools still offer meaningful value. However, critical workflows should not rely entirely on rented intelligence.

Technology leaders should ask practical questions about pricing exposure, API dependency, and operational resilience. If pricing doubles, limits tighten, or APIs change unexpectedly, businesses need architectures that can absorb those shocks.
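One way to absorb those shocks is to keep application code behind a small, provider-neutral interface and route around a provider that fails. The Python sketch below uses a `typing.Protocol` plus an ordered fallback list. `CompletionProvider`, `ProviderError`, and the two stub adapters are hypothetical names for illustration; no real vendor SDK is called.

```python
from typing import List, Protocol, Tuple


class ProviderError(Exception):
    """Raised by a provider adapter on quota exhaustion, outage, or a breaking API change."""


class CompletionProvider(Protocol):
    """The only interface application code depends on; vendors sit behind adapters."""

    name: str

    def complete(self, prompt: str) -> str: ...


def complete_with_fallback(providers: List[CompletionProvider], prompt: str) -> Tuple[str, str]:
    """Try providers in order; return (provider_name, completion) from the first that succeeds."""
    failures = []
    for provider in providers:
        try:
            return provider.name, provider.complete(prompt)
        except ProviderError as exc:
            failures.append(f"{provider.name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(failures))


# Hypothetical stand-in adapters; a real adapter would wrap a vendor SDK or a local runtime.
class ExhaustedCloudAPI:
    name = "cloud-api"

    def complete(self, prompt: str) -> str:
        raise ProviderError("monthly credit exhausted")


class LocalModel:
    name = "local-model"

    def complete(self, prompt: str) -> str:
        return f"[local] {prompt}"


print(complete_with_fallback([ExhaustedCloudAPI(), LocalModel()], "summarize this diff"))
```

Callers only see `complete_with_fallback`. A price increase or API change at one vendor then becomes an adapter swap or a reordering of the list, rather than a rewrite of every workflow.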

Conclusion

The AI free-lunch era is ending. Cheap subscriptions accelerated adoption, but they were never designed to last forever. Rising compute costs, growing automation workloads, and infrastructure realities are pushing AI companies toward sustainable pricing models.

This is not bad news. It is simply market maturity. The real lesson is strategic: businesses should not build critical workflows on the assumption of permanently cheap rented intelligence. Developers, founders, and every fractional CTO must now evaluate AI dependency, vendor exposure, and infrastructure resilience more carefully.

Organizations that act early will gain stronger cost control, better operational stability, and greater long-term flexibility. As AI becomes core infrastructure, ownership and strategic planning matter more than temporary subscription convenience. Businesses exploring smarter AI implementation, stronger infrastructure control, and better system integration strategies are increasingly turning toward solutions-driven companies like startuphakk to prepare for the next phase of AI adoption.