Introduction

Artificial intelligence is changing how software teams build products. For years, most developers believed that if you wanted powerful AI, you had to pay for expensive cloud subscriptions. Monthly fees, API pricing, and usage restrictions became normal business expenses. Companies accepted these costs because advanced AI models were only available through cloud providers.

That assumption is now changing rapidly. Open-source local AI models have improved at an incredible pace, and models like Qwen3.6-27B are now delivering benchmark results that come surprisingly close to premium frontier models. The major differences are cost and control. With sufficiently capable consumer hardware, these models can run without monthly subscriptions or token-based billing.

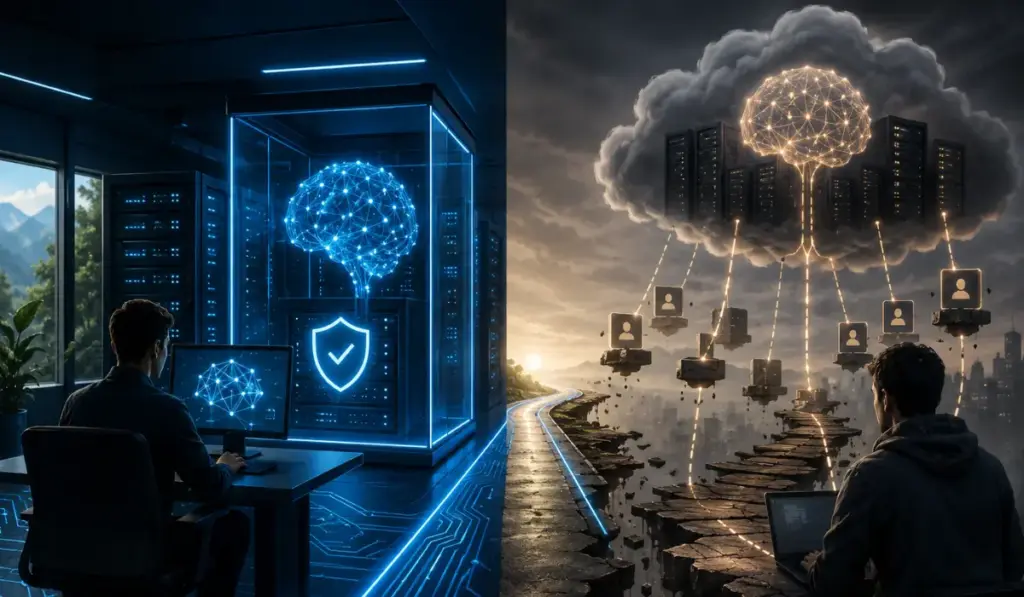

This shift matters for developers, startups, and engineering teams trying to manage AI costs while protecting their data. The conversation is no longer about whether local AI is useful. The real question is simple: why keep renting intelligence when you can own it? Cloud AI tools offer convenience, but they also introduce recurring expenses, vendor dependency, and growing data exposure risks.

For teams working with proprietary code, internal systems, or client-sensitive workflows, these trade-offs matter even more. Running models locally changes the economics completely. AI stops being another software subscription and starts becoming infrastructure your business owns. This is why many technical leaders, as well as organizations working with a fractional CTO, are rethinking how AI fits into long-term technical architecture.

The Gap Between Local and Frontier AI Has Collapsed

For years, closed AI models dominated the industry conversation. The message was simple: if you wanted strong reasoning, advanced coding support, and reliable agentic workflows, you had to pay for cloud models from major vendors. Local models were often treated as weaker alternatives designed mainly for hobbyists or researchers.

That gap has narrowed significantly. Qwen3.6-27B recently posted strong results on SWE-bench Verified, a benchmark that tests how well models resolve real issues from software repositories. Unlike simple code-completion tests, it focuses on practical engineering work, and the reported scores put Qwen3.6-27B surprisingly close to premium frontier models.

This is not a minor improvement. It changes the economics of AI adoption because developers can now run highly capable models locally while giving up far less performance than before.

Another important benchmark is Terminal-Bench 2.0, which focuses on agentic coding workflows. It measures command execution, file editing, autonomous reasoning, and multi-step problem solving inside real terminal environments.

Strong performance in these benchmarks suggests local AI is becoming practical for real engineering tasks. This is a major shift for software teams because local AI is no longer an experimental option. It is becoming a serious operational choice for teams building production software, automation pipelines, and AI-assisted workflows.

Benchmark Numbers Still Need Context

Benchmark numbers are useful, but they never tell the full story. Model vendors often publish scores obtained in optimized internal environments. These setups may include custom tools, specialized scaffolding, or carefully tuned workflows designed to maximize performance.

As a result, published scores do not always reflect production behavior. Real software development is much messier. Developers deal with incomplete requirements, unclear documentation, technical debt, legacy systems, and unexpected bugs. Production engineering is rarely as clean as benchmark environments.

This is why independent testing matters. A model may perform well in launch benchmarks but still struggle in real workflows. Teams should test models using their own repositories, internal tools, and actual workflows before making infrastructure decisions.
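One way to make this kind of in-house testing concrete is a small pass-rate harness. The sketch below is illustrative only: `EvalTask`, `pass_rate`, and `echo_model` are hypothetical names, and the stand-in model simply uppercases its prompt. In practice you would swap in a call to whichever local or cloud model you are evaluating and write checks drawn from your own repositories.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalTask:
    name: str
    prompt: str
    check: Callable[[str], bool]  # returns True if the output is acceptable


def pass_rate(model: Callable[[str], str], tasks: list[EvalTask]) -> float:
    """Return the fraction of tasks whose model output passes its check."""
    if not tasks:
        raise ValueError("need at least one task")
    passed = 0
    for task in tasks:
        try:
            ok = task.check(model(task.prompt))
        except Exception:
            ok = False  # a crash in the model or the check counts as a failure
        passed += ok
    return passed / len(tasks)


# Stand-in model for demonstration only (assumption): replace with a real model call.
def echo_model(prompt: str) -> str:
    return prompt.upper()


tasks = [
    EvalTask("uppercases", "fix bug", lambda out: out == "FIX BUG"),
    EvalTask("mentions tests", "refactor", lambda out: "TEST" in out),
]
print(pass_rate(echo_model, tasks))  # 0.5: the first check passes, the second fails
```

Running the same task list against two candidate models gives a like-for-like comparison on your own work, which is usually more informative than a published leaderboard score.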

Practical questions matter more than headline numbers. Can the model understand your codebase? Can it handle longer tasks reliably? Does it integrate well with your development environment? Experienced engineering leaders understand this pattern well because software history is full of tools that looked great in demos but underperformed in production.

Where Local Models Already Deliver Value

Local models do not need to outperform frontier models in every category to be useful. They only need to perform well in workflows that matter most. For many teams, that threshold has already been reached.

Local models are becoming highly effective for code generation, debugging, refactoring, documentation support, bug analysis, and internal tooling automation. These workflows repeat across nearly every software team. When they run through cloud APIs, costs increase quickly because every request is billed by usage.

With local deployment, the marginal cost of each request drops close to zero, limited mainly to electricity. This changes developer behavior significantly. Teams can run longer sessions, experiment more freely, and build agentic workflows without worrying about token quotas or monthly billing.

This freedom creates a healthier engineering environment. Developers stop optimizing around API costs and start optimizing around outcomes. That shift encourages experimentation, automation, and deeper workflow integration.

The Hidden Cost of Cheap AI Subscriptions

Cheap AI subscriptions look attractive on the surface. A low monthly fee feels like an easy productivity investment. Many developers see it as a small operational expense.

However, low-cost AI often comes with trade-offs that many teams overlook. Cloud-based AI tools process massive amounts of developer interaction data. This can include prompts, code snippets, debugging workflows, repositories, and iterative problem-solving behavior.

For many providers, this data is highly valuable. Developer workflows reveal how real engineers solve practical problems, and that information can improve future models significantly. This creates an uncomfortable business reality where the subscription fee may not be the most valuable part of the transaction.

In some cases, the real asset is the engineering data itself. This matters even more for teams working with proprietary software, internal systems, custom algorithms, or client-sensitive projects. Uploading this information to external services introduces strategic risk.

Not every provider behaves the same way, but businesses should understand the incentives behind cheap AI offerings before building critical workflows around them.

Cloud AI Creates Dependency Risks

Subscription AI also creates operational dependency. At first, this feels harmless. A team adopts an API, productivity improves, and workflows become integrated into daily development processes.

Over time, dependency grows, and then limitations appear. Prices change, usage caps tighten, rate limits are introduced, and policies evolve. Suddenly, important workflows depend on systems outside your control.

This is not a new pattern in software. The industry has seen similar dependency cycles with SaaS platforms, cloud providers, and vendor ecosystems for decades. AI is following the same path.

Owning more of your AI stack reduces these risks. Local deployment gives teams greater control over availability, deployment strategy, privacy, and long-term architecture. This creates stronger operational resilience.

The Economics of Running Local AI

Cost is one of the strongest arguments for local AI adoption. Running capable local models requires an upfront hardware investment, which may initially feel expensive.

However, recurring AI costs grow quickly. Cloud AI pricing often includes monthly subscriptions, API fees, token billing, premium tiers, and usage scaling. For teams running agentic coding workflows heavily, these expenses can rise rapidly.

Local deployment changes this equation completely. After setup, ongoing costs are dramatically lower and consist mainly of electricity and maintenance. There are no token charges, no subscription limits, and no surprise invoices.

This creates more predictable infrastructure spending. For startups and growing engineering teams, predictable costs improve operational flexibility and reduce budget pressure.
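The trade-off between upfront hardware and recurring fees can be framed as a simple break-even calculation. The sketch below is a rough model under stated assumptions: the function name `break_even_months` and every dollar figure are hypothetical placeholders, not measured prices, and the model ignores depreciation, staff time, and resale value.

```python
import math
from typing import Optional


def break_even_months(
    hardware_cost: float,   # one-time purchase (assumed figure)
    cloud_monthly: float,   # current monthly cloud AI spend (assumed figure)
    local_monthly: float,   # monthly electricity and upkeep (assumed figure)
) -> Optional[int]:
    """Months until cumulative savings cover the hardware, or None if never."""
    savings = cloud_monthly - local_monthly
    if savings <= 0:
        return None  # local running costs are not lower, so there is no payback
    return math.ceil(hardware_cost / savings)


# Illustrative numbers only: $3,000 of hardware, $400/month cloud, $50/month local.
print(break_even_months(3000.0, 400.0, 50.0))  # 9
```

With these placeholder numbers the hardware pays for itself in nine months. A team with light usage may find the payback period is long or nonexistent, which is why it is worth plugging in your own invoices before committing.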

Why Local Models Work Better for Agentic Coding

Agentic coding is more demanding than simple chatbot interactions. It requires models to read files, run commands, edit code, analyze outputs, and iterate repeatedly until tasks are complete.
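The read, run, edit, and iterate cycle described above can be sketched as a short loop. This is a minimal illustration, not any particular framework's implementation: `run_agent`, `scripted_model`, and the in-memory `tools` are hypothetical, and the "model" is a fixed script rather than a real LLM.

```python
from typing import Callable

# An action is a dict such as {"tool": "read_file", "arg": "app.py"}.
# The special tool name "done" ends the loop.


def run_agent(
    model: Callable[[list], dict],
    tools: dict,
    task: str,
    max_steps: int = 10,
) -> list:
    """Ask the model for actions until it reports done or the step limit hits."""
    history = [f"task: {task}"]
    for _ in range(max_steps):
        action = model(history)  # the full history goes back to the model each step
        if action["tool"] == "done":
            history.append(f"done: {action['arg']}")
            return history
        tool = tools.get(action["tool"])
        if tool is None:
            result = f"error: unknown tool {action['tool']}"
        else:
            result = tool(action["arg"])
        history.append(f"{action['tool']}({action['arg']}) -> {result}")
    history.append("stopped: step limit reached")
    return history


# Stand-in model (assumption): follows a fixed script based on the history length.
def scripted_model(history: list) -> dict:
    script = [
        {"tool": "read_file", "arg": "app.py"},
        {"tool": "run", "arg": "pytest"},
        {"tool": "done", "arg": "tests pass"},
    ]
    return script[len(history) - 1]


# Fake tools that return canned strings instead of touching the file system.
tools = {
    "read_file": lambda path: "print('hi')",
    "run": lambda command: "1 passed",
}

for line in run_agent(scripted_model, tools, "make the tests pass"):
    print(line)
```

Note that the entire history is sent back to the model on every step, so the amount of text processed grows with each iteration.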

These workflows generate heavy usage. Running them through cloud APIs introduces three major problems: cost, latency, and data exposure.

Local deployment reduces all three. Latency can improve because requests skip the network round trip, although generation speed still depends on your hardware. Costs drop because token billing disappears. Privacy improves because code remains inside your environment.

For engineering teams building autonomous workflows, this is highly valuable. As agentic development becomes more common, infrastructure decisions matter more. The economics increasingly favor local deployment.

Conclusion

Local AI models are no longer a niche experiment or a budget alternative. They are becoming a serious strategic option for developers, startups, and software teams.

Performance gaps are shrinking, the required hardware is becoming more accessible, and operational economics are improving rapidly. Most importantly, local deployment gives businesses something cloud subscriptions cannot fully provide: control.

Businesses gain control over costs, privacy, deployment, and long-term architecture. The smartest strategy is not to reject cloud AI completely, because frontier models still offer value in certain workflows. The better approach is to use cloud AI intentionally while building stronger local capabilities wherever ownership matters.
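A hybrid approach like this can be expressed as a small routing policy. The sketch below is one possible policy under stated assumptions, not a recommendation for every team: the `Request` fields and `choose_backend` are hypothetical names, and the rule shown defaults to local and uses the cloud only when a request is explicitly opted in and carries nothing sensitive.

```python
from dataclasses import dataclass


@dataclass
class Request:
    prompt: str
    contains_proprietary_code: bool = False
    contains_client_data: bool = False
    allow_cloud: bool = False  # must be set deliberately by the caller


def choose_backend(req: Request) -> str:
    """Return "cloud" only for opted-in, non-sensitive requests; otherwise "local"."""
    sensitive = req.contains_proprietary_code or req.contains_client_data
    if req.allow_cloud and not sensitive:
        return "cloud"
    return "local"


print(choose_backend(Request("summarize this public RFC", allow_cloud=True)))
print(choose_backend(Request("refactor billing.py", contains_proprietary_code=True, allow_cloud=True)))
```

Defaulting to local means a forgotten flag fails safe: sensitive material stays in your environment unless someone makes an explicit choice to send it out.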

This is the shift shaping the next phase of AI adoption. Companies that treat AI as infrastructure instead of a subscription dependency will likely have greater flexibility in the years ahead. Businesses looking to integrate AI, connect internal systems, or design scalable automation workflows should start thinking beyond monthly subscriptions and toward long-term infrastructure ownership.

That is exactly why startuphakk focuses on building custom software and infrastructure solutions that help businesses move toward AI systems they can truly control.