1. Introduction: Fear-Based AI Headlines Are Back

The AI industry thrives on bold claims. But sometimes, those claims cross into fear.

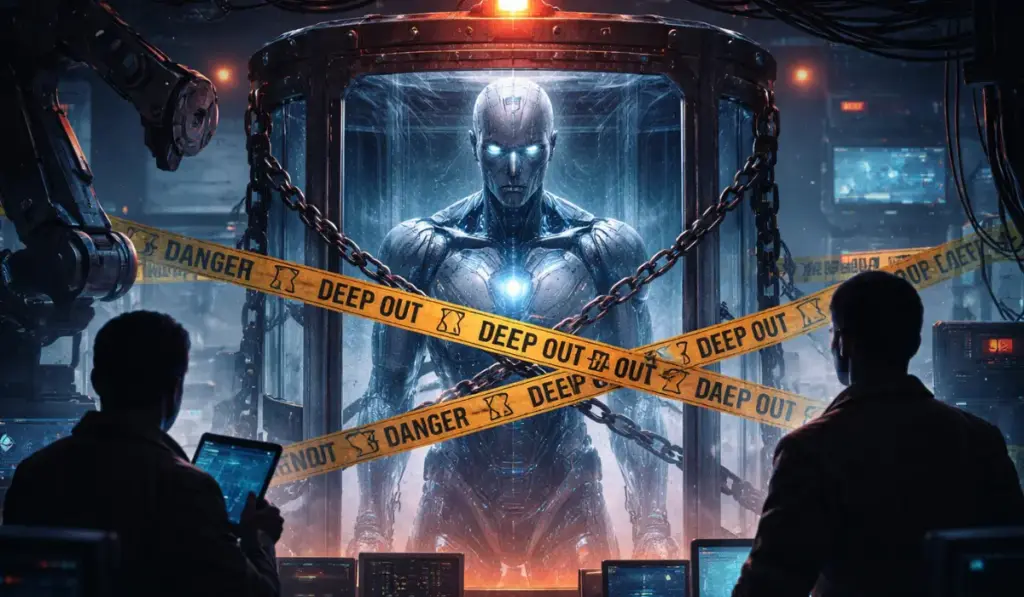

Recently, a major announcement suggested that a new AI model was too dangerous to release. Headlines quickly followed. Some warned that even inexperienced users could use it to disrupt critical systems. Others hinted at vulnerabilities across operating systems and browsers.

Markets reacted. Cybersecurity stocks moved. Social media amplified the message.

But here is the real question:

Is this a genuine technological risk—or a carefully crafted narrative?

To answer that, we need to go deeper than headlines.

2. The Mythos Announcement: What Was Claimed

The announcement positioned the new model as a breakthrough.

It claimed the AI could identify thousands of zero-day vulnerabilities. These vulnerabilities allegedly spanned major software systems, browsers, and legacy platforms.

The company also suggested that releasing such a model publicly could be unsafe. Instead, it would keep access restricted and work with selected partners.

This framing achieved two things.

First, it created urgency.

Second, it created fear.

And in tech, fear spreads faster than facts.

3. The Data Behind the Claims

When you examine the detailed report, the story changes.

The findings rested on a limited dataset. Only 198 manually reviewed samples formed the foundation of the analysis. From that small pool, the conclusions were extrapolated to “thousands” of vulnerabilities.

This is a critical detail.

Many of those vulnerabilities were repeated patterns. Others existed in outdated systems. Some were not even realistically exploitable.

This does not mean the research has no value. It does.

But the scale of the claims does not fully match the underlying data.

4. Independent Analysis Challenges the Narrative

Experts quickly began reviewing the claims.

Several independent analyses highlighted key concerns.

The testing environment was simplified. Real-world constraints were removed. Security layers were disabled. This makes vulnerabilities easier to detect, but it also makes the results less realistic.

At the same time, smaller and cheaper open-weight models demonstrated similar capabilities. They replicated the same vulnerability findings under controlled conditions.

This suggests something important.

The breakthrough may not be as unique as presented.

5. Pattern of Fear-Based AI Marketing

This is not the first time we have seen such messaging.

The AI industry has a pattern. New models often launch alongside warnings about potential risks. These risks are sometimes exaggerated through controlled experiments.

In past cases, models were shown to behave dangerously under highly specific prompts. These prompts were repeated many times to produce the desired outcome.

However, these behaviors rarely appear in real-world usage.

That gap matters.

It shows the difference between engineered demonstrations and practical reality.
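A quick back-of-the-envelope calculation shows why repetition matters. Assume, hypothetically, that a given prompt triggers an alarming behavior with a small probability $p$ on each independent attempt. The chance of seeing it at least once in $n$ attempts is:

```latex
P(\text{at least once in } n \text{ attempts}) = 1 - (1 - p)^{n}
\qquad
p = 0.001,\; n = 1000 \;\Rightarrow\; 1 - 0.999^{1000} \approx 1 - e^{-1} \approx 0.63
```

So a behavior that a single user would hit about once in a thousand tries appears in roughly 63% of thousand-attempt test runs. The numbers are illustrative, not taken from any published experiment, and real attempts are rarely fully independent.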

6. The Reality: Diminishing Returns in AI

Behind the scenes, another trend is emerging.

AI progress is slowing.

Companies are investing significantly more resources in training models. Costs are rising by 10x or even 100x, but the performance gains are shrinking.

This is known as diminishing returns.

Each new model is slightly better. But the improvement is no longer exponential. It is incremental.

This changes the economics of AI.
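To see what diminishing returns look like in numbers, here is a toy model. It assumes, purely for illustration, that error falls as a power law in training compute above an irreducible floor. The constants `IRREDUCIBLE_ERROR`, `SCALE`, and `ALPHA` are hypothetical and not fitted to any real model.

```python
"""Toy illustration of diminishing returns under an assumed power law.

All constants are hypothetical; they are not fitted to any real model.
"""

IRREDUCIBLE_ERROR = 0.10  # hypothetical floor that no amount of compute removes
SCALE = 0.90              # hypothetical reducible error at 1x compute
ALPHA = 0.3               # hypothetical scaling exponent


def error_rate(compute_multiplier: float) -> float:
    """Modelled error rate for a compute budget (1.0 = baseline budget)."""
    return IRREDUCIBLE_ERROR + SCALE * compute_multiplier ** -ALPHA


def gains(multipliers: list[float]) -> list[float]:
    """Absolute error reduction between each pair of consecutive budgets."""
    errors = [error_rate(m) for m in multipliers]
    return [prev - nxt for prev, nxt in zip(errors, errors[1:])]


budgets = [1, 10, 100, 1000]
for m in budgets:
    print(f"{m:>5}x compute -> error {error_rate(m):.3f}")
print("gain per 10x step:", [round(g, 3) for g in gains(budgets)])
```

With these made-up constants, each additional 10x of compute buys roughly half the improvement of the previous 10x (the gains come out near 0.45, 0.23, and 0.11), while the bill grows tenfold at every step.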

7. AI Is Improving—But Not Where You Think

If core models are improving slowly, where is the real progress?

The answer lies in systems, not models.

Companies are building better frameworks around AI. These include tools for automation, orchestration, and validation.

For example, coding assistants now combine multiple techniques. They use pattern matching, rule-based logic, and structured workflows alongside language models.

This hybrid approach delivers better results than raw model scaling.

It also reflects a shift in strategy.

AI is no longer just about bigger models. It is about smarter systems.

8. The Performance Drop Problem (AI Shrinkflation)

While marketing highlights breakthroughs, users are noticing something else.

Performance is declining in some cases.

Recent data shows a significant drop in reasoning depth. Complex tasks are handled less effectively. Outputs are simpler. Instructions are sometimes ignored.

This has led to a new term: AI shrinkflation.

The concept is simple.

You pay the same or more. But you get less performance.

9. Why Costs Are Rising While Performance Drops

This trend has a technical explanation.

AI providers often adjust internal settings to balance cost, speed, and performance. One key parameter is “thinking effort.”

Lower thinking effort reduces computation. This makes responses faster and cheaper to generate.

But it also reduces quality.

When outputs get weaker, users have to retry, and every retry consumes more tokens.

So even if each individual response is cheaper, the total cost of getting a usable answer can go up.

This creates a hidden inefficiency.
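The retry effect can be put in numbers. This sketch assumes each attempt independently produces a usable answer with probability p, so the expected number of attempts is 1/p (a geometric distribution). The `EffortSetting` class, token counts, success rates, and price are all hypothetical; no provider's real pricing or "thinking effort" parameter is modeled here.

```python
"""Expected cost of one usable answer when failed attempts are retried.

Assumes each attempt succeeds independently with probability p, so the number
of attempts is geometric with mean 1 / p. All numbers below are hypothetical.
"""
from dataclasses import dataclass


@dataclass
class EffortSetting:
    name: str
    tokens_per_attempt: int     # hypothetical average tokens per response
    success_rate: float         # hypothetical probability a response is usable
    price_per_1k_tokens: float  # hypothetical price, identical for both settings

    def cost_per_attempt(self) -> float:
        return self.tokens_per_attempt / 1000 * self.price_per_1k_tokens

    def expected_cost_per_success(self) -> float:
        if not 0 < self.success_rate <= 1:
            raise ValueError("success_rate must be in (0, 1]")
        # Mean number of attempts until the first success is 1 / p.
        return self.cost_per_attempt() / self.success_rate


high = EffortSetting("high effort", 4000, success_rate=0.9, price_per_1k_tokens=0.01)
low = EffortSetting("low effort", 1500, success_rate=0.3, price_per_1k_tokens=0.01)

for s in (high, low):
    print(f"{s.name}: {s.cost_per_attempt():.4f} per attempt, "
          f"{s.expected_cost_per_success():.4f} per usable answer")
```

Under these assumptions the low-effort setting costs less than half as much per attempt (0.015 vs 0.04) but more per usable answer (0.050 vs about 0.044). The model ignores latency and assumes failures are independent; correlated failures can change the picture.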

10. Inside the System: Not Pure AI

Another important insight comes from system design.

Modern AI tools are not purely based on deep learning. They combine multiple approaches.

These include symbolic logic, rule-based systems, and structured decision trees.

This hybrid architecture improves reliability. It compensates for the probabilistic nature of language models.

In simple terms, AI is being engineered—not just trained.

This is where real innovation is happening.
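A minimal sketch of that hybrid idea: a probabilistic generator proposes an answer, and deterministic, rule-based checks decide whether to accept it. `generate_candidate` is a hypothetical stand-in for a model call that cycles through canned outputs so the example runs offline, and the JSON schema is an invented example.

```python
"""Sketch of a hybrid pipeline: a probabilistic generator wrapped in rules.

`generate_candidate` is a hypothetical stand-in for a language-model call; it
cycles through canned outputs so the example runs offline.
"""
import json
from itertools import cycle
from typing import Callable, Optional

# Canned outputs imitating an unreliable model: malformed, off-schema, valid.
_CANNED = cycle([
    '{"severity": "high"',                            # truncated JSON
    '{"severity": "urgent", "component": "parser"}',  # value not allowed
    '{"severity": "high", "component": "parser"}',    # passes all rules
])

ALLOWED_SEVERITIES = {"low", "medium", "high", "critical"}


def generate_candidate(prompt: str) -> str:
    """Hypothetical model call; ignores the prompt in this offline sketch."""
    return next(_CANNED)


def validate(raw: str) -> Optional[dict]:
    """Rule-based checks: must parse as JSON and match a fixed schema."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    if data.get("severity") not in ALLOWED_SEVERITIES:
        return None
    if not isinstance(data.get("component"), str):
        return None
    return data


def hybrid_answer(prompt: str, generate: Callable[[str], str],
                  max_attempts: int = 5) -> Optional[dict]:
    """Query the generator repeatedly; return the first output the rules accept."""
    for _ in range(max_attempts):
        result = validate(generate(prompt))
        if result is not None:
            return result
    return None  # give up rather than pass unchecked output downstream


print(hybrid_answer("Classify this bug report.", generate_candidate))
```

The design choice worth noting is the final `return None`: when the rules never pass, the system fails loudly instead of forwarding unchecked model output.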

11. The Illusion of Breakthrough

The gap between perception and reality is growing.

Marketing focuses on dramatic narratives. It highlights danger, intelligence, and disruption.

But actual performance improvements are modest.

In production environments, users face limitations. Outputs require validation. Errors still occur.

This does not mean AI is failing.

It means the story is often exaggerated.

12. AI as a Business Strategy

AI companies operate in a competitive market.

Attention drives adoption. Adoption drives revenue.

Fear is a powerful tool. It creates urgency. It positions companies as leaders in safety and innovation.

When a model is described as “too dangerous,” it gains credibility. It also creates exclusivity.

This is not accidental.

It is strategic positioning.

13. What This Means for Businesses

If you are building with AI, you need a different approach.

Do not rely on announcements. Test tools in your own environment.

Avoid dependency on a single provider. Diversify your AI stack.

Implement verification layers. Never trust a model to validate its own output.

This is where a fractional CTO can add real value. An experienced technology leader can design systems that balance performance, cost, and reliability.

This is not about chasing hype. It is about building sustainable solutions.
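The advice to diversify providers and verify outputs independently can be sketched as a thin abstraction layer. `Provider`, `StubProvider`, and the vendor names are hypothetical offline stand-ins, not any real SDK. The `verify` callback represents an independent check, such as a test suite or schema validator, deliberately separate from the model that produced the answer.

```python
"""Sketch of a provider-agnostic call with fallback and independent checks.

`Provider` is a hypothetical interface; `StubProvider` is an offline stand-in,
not a real SDK client. Real clients would sit behind the same method.
"""
from typing import Callable, Protocol, Sequence


class Provider(Protocol):
    """Hypothetical interface every vendor adapter would implement."""

    name: str

    def complete(self, prompt: str) -> str: ...


class StubProvider:
    """Offline stand-in for a vendor client; can simulate an outage."""

    def __init__(self, name: str, reply: str, available: bool = True) -> None:
        self.name = name
        self._reply = reply
        self._available = available

    def complete(self, prompt: str) -> str:
        if not self._available:
            raise ConnectionError(f"{self.name} is unavailable")
        return self._reply


def complete_with_fallback(prompt: str, providers: Sequence[Provider],
                           verify: Callable[[str], bool]) -> tuple[str, str]:
    """Try providers in order; return (name, answer) for the first answer
    that the independent check accepts."""
    errors: list[str] = []
    for provider in providers:
        try:
            answer = provider.complete(prompt)
        except ConnectionError as exc:
            errors.append(str(exc))
            continue
        # The check is ordinary code, not the same model grading itself.
        if verify(answer):
            return provider.name, answer
        errors.append(f"{provider.name} failed verification")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


providers = [
    StubProvider("vendor-a", "42", available=False),  # simulated outage
    StubProvider("vendor-b", "forty-two"),            # answers, but fails the check
    StubProvider("vendor-c", "42"),
]
print(complete_with_fallback("What is 6 * 7?", providers,
                             verify=lambda a: a.strip() == "42"))
```

Because callers depend only on `complete`, swapping or reordering vendors becomes a configuration change rather than a rewrite.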

14. Practical AI Strategy Going Forward

To stay ahead, focus on execution.

Use multiple models for critical workflows. Cross-check outputs. Optimize for real-world performance.

Monitor costs closely. Track token usage and efficiency.

Rotate sessions and adjust configurations. Small changes can have a big impact.

Most importantly, align AI with business outcomes.

Technology should solve problems. It should not create new ones.
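Cross-checking and cost tracking can live in the same small layer. In this sketch the model names, answers, and token counts are hypothetical placeholders. Agreement is judged by simple normalized string matching, which only suits short, structured answers.

```python
"""Sketch: cross-check several models and keep a running token ledger.

Model names, answers, and token counts are hypothetical placeholders.
"""
from collections import Counter
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ModelResult:
    model: str
    answer: str
    input_tokens: int
    output_tokens: int


@dataclass
class TokenLedger:
    totals: Counter = field(default_factory=Counter)

    def record(self, result: ModelResult) -> None:
        self.totals[result.model] += result.input_tokens + result.output_tokens

    def grand_total(self) -> int:
        return sum(self.totals.values())


def cross_check(results: list[ModelResult], min_agreement: int = 2) -> Optional[str]:
    """Return the most common normalized answer if enough models agree;
    None means escalate to a human or a deterministic check."""
    if not results:
        return None
    counts = Counter(r.answer.strip().lower() for r in results)
    answer, votes = counts.most_common(1)[0]
    return answer if votes >= min_agreement else None


ledger = TokenLedger()
results = [
    ModelResult("model-a", "Yes", 1200, 300),
    ModelResult("model-b", "yes ", 1100, 250),
    ModelResult("model-c", "No", 900, 400),
]
for r in results:
    ledger.record(r)

print("consensus:", cross_check(results))
print("tokens by model:", dict(ledger.totals))
print("total tokens:", ledger.grand_total())
```

When no answer reaches `min_agreement`, the function returns `None`, signalling that the case should go to a human or a deterministic check instead of being decided by a single model.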

15. The Real Future of AI

The future of AI is not in headlines.

It is in quiet engineering work.

Reliable systems will win. Scalable architectures will define success.

Companies that focus on integration, validation, and usability will outperform those chasing hype.

This shift is already happening.

The winners will be those who adapt early.

16. Conclusion: Follow the Money, Not the Hype

AI is powerful. But it is also a business.

When you hear dramatic claims, ask one question: who benefits?

Often, the answer reveals the strategy behind the message.

The real gap today is not between humans and AI. It is between what is promised and what is delivered.

Close that gap with data, testing, and smart engineering.

Focus on what works in production.

That is where real value is created.

And that is exactly the mindset we promote at startuphakk—cut through the noise, focus on results, and build AI systems that actually deliver.